New study finds AI bias while adoption outpaces oversight

Why this story

A Stanford study on the expert-public opinion gap offers a different research angle from previous coverage of AI replacing workers.

OPTIMIST VIEW

Public fear of AI stems from knowledge gaps, not actual risks, and education can fix it.

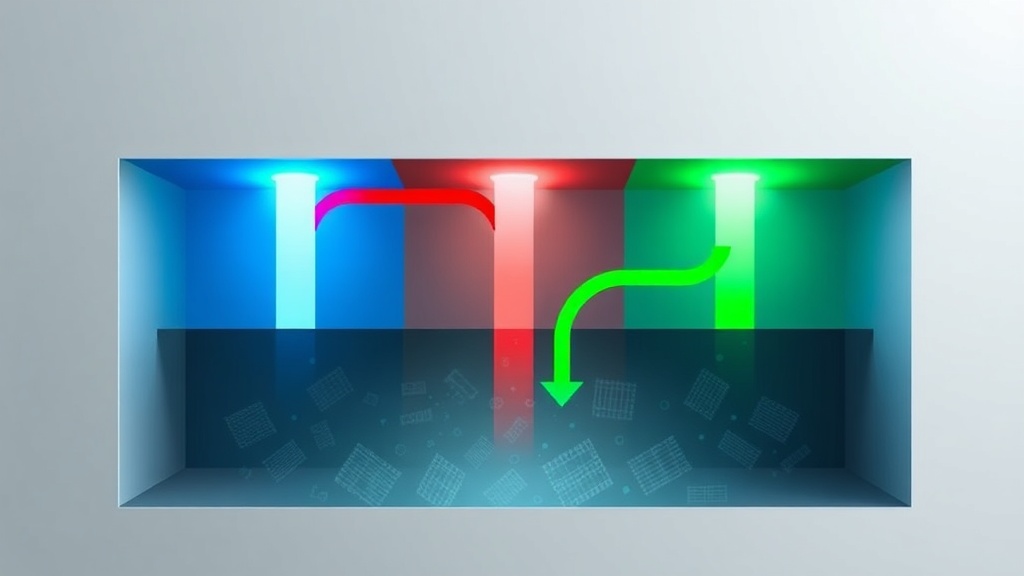

SKEPTIC VIEW

AI models embed center-left bias invisibly across platforms, shaping opinions.

INDUSTRY REALITY

Corporate AI adoption outpaces oversight, creating massive liability exposure.

Hidden Truth

While everyone debates AI bias and public perception, the Stanford AI Index reveals a more uncomfortable reality: the expert-public divide isn't about bias or fear; it's about…

Should AI companies be required to publish bias testing results before deployment?